by Lisa Bowers, The Open University

Introduction

For creative practitioners who prefer a more ‘hands-on’ engagement with their mediums, the exclusive use of computer-aided design (CAD) software, which relies primarily on sight without touch, can feel like an impoverished sensory experience. Haptic feedback, using technology that harnesses touch perception, has the potential to allow users to feel more engaged and immersed in their personal creative projects. One group in the maker community that could particularly benefit from the affordances of haptic tooling is the sight-impaired (Minogue et al., 2006). In the absence of tools that exploit touch and sound, many makers within the sight-impaired community feel there are serious barriers to access in the use of online or digital CAD systems.

Past studies of haptics in the creative industries have focused on the output and efficacy of haptics; in this essay we present a study that focuses on user-centred experiences of haptics – in this case a tool designed for drawing via touch and sound. The study was undertaken by research staff at The Open University collaborating with the HAPPIE consortium, using Audience of the Future (AotF) funding. Working closely with RNIB staff and service users, we explored the affordances of virtual haptic and sonified tooling specifically designed for sight-impaired maker communities. The study found that users could exploit their sensory perceptions within the virtual space, and that they enjoyed and could readily perceive the drawings they created.

Background: blind drawing

John M. Kennedy (1996) asked the question ‘Can blind people draw, and can they understand what they draw?’. Kennedy went on to explore how people without sight draw: whether from physical objects, or their environment, or from their own mental pictures. Kennedy established that sighted and non-sighted people hold similar mental pictures (Kennedy et al., 2003).

Some people with sight impairments create analogue drawings from their picture memories alone, using a variety of analogue tools that make an embossed/debossed line. However, this process does not allow for any editing or error correction. Thus, analogue drawing can place barriers on sight-impaired users’ creative agency or drawing efficacy.

“HAPPIE” (Haptic Authoring Pipeline for the Production of Immersive Experiences)

The HAPPIE group is a collaborative consortium of expert academic and commercial practitioners working together to build cutting-edge tools that engage with and transform how underrepresented groups in society experience the virtual realm. The OU joined the HAPPIE consortium in 2019. The aim of the collaborative group is to review how to make haptics more ubiquitous and to design haptic systems that enable a wide spectrum of users to feel more immersed within virtual space. The group feels that, as human engagement with technology moves forward, the breadth and depth of immersive applications will be restricted unless they draw on more sensory perceptions, such as touch and sound.

Why Haptics?

As relatively little research has been conducted on user experiences of haptic tooling, and even less on sight-impaired users’ creative experiences of touch tooling, we elected to design a series of user studies to evaluate users’ perceptions of haptics and explore their experiences. We were also interested in the extent to which touch stimulation and sonified cues could give sight-impaired users greater access to computer-aided design (CAD). Using qualitative user studies, we asked users to complete various stipulated and free drawing exercises using a haptic interface with sonification to enhance information about location and connection. The aim was to understand how sensory enhancement might affect sight-impaired users and how richer sensory perceptions might influence users’ personal drawing style.

Drawing on drawing study (analogue to virtual)

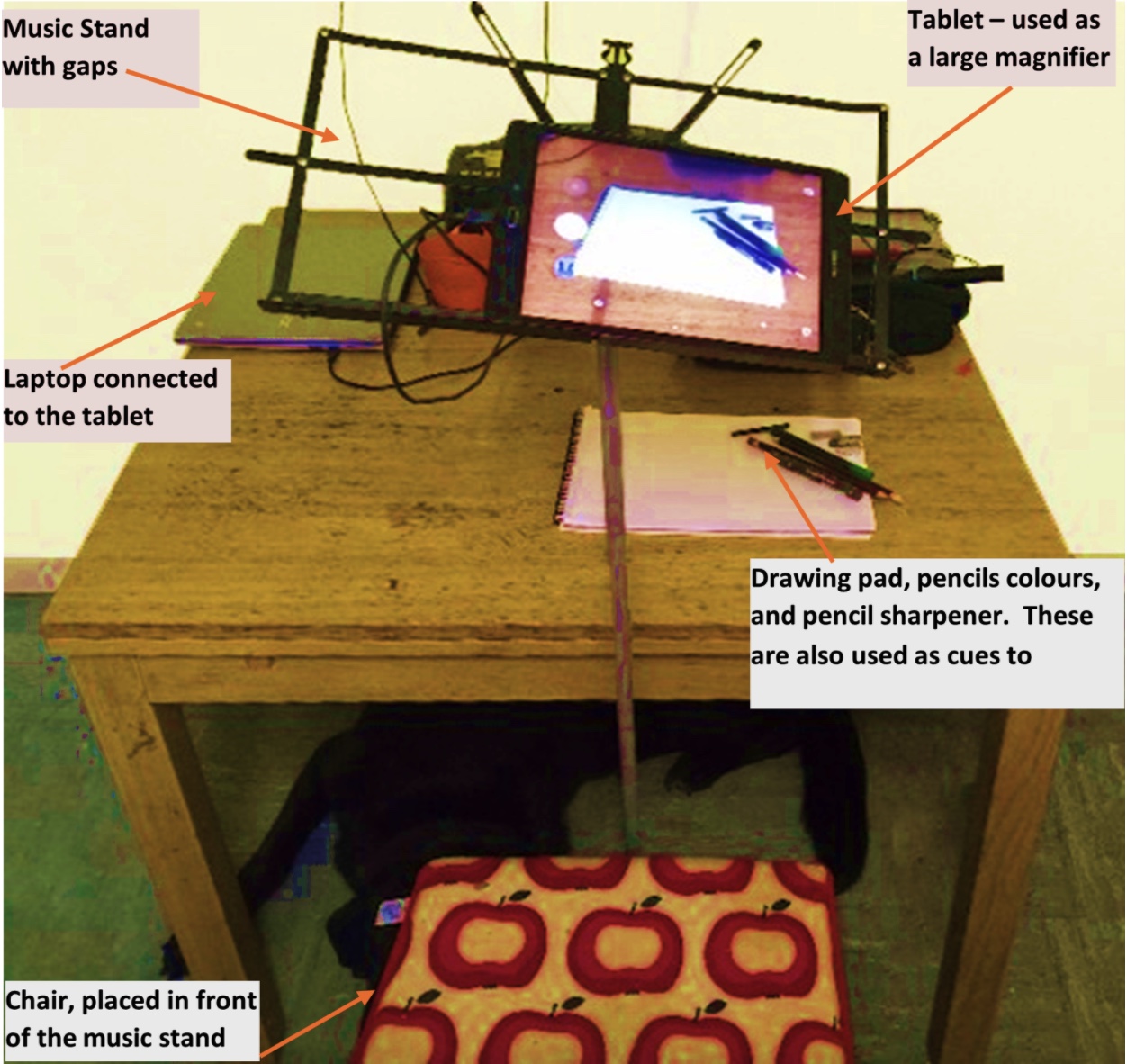

The user group shared with the team a variety of adapted tools used for drawing, and ‘drawing hacks’ they had previously used to meet their needs and help complete particular drawing tasks (see Figure 1). Users were initially asked to give the research team a technical ‘wish-list’ of assistive technologies which they felt would complement their personal drawing abilities and styles.

Due to Covid-19, the user studies were adapted to be conducted remotely. Participants were provided with a ‘capsule tool kit’, which included a multi-staged haptic device and interface system specifically designed for drawing. The research facilitators kept in continuous contact with users via Skype throughout the testing period. The users’ progress was observed, checked and recorded via screen share.

More specifically, the study aimed to address the following research question:

Can a commercial ‘off the shelf’ haptic device, combined with specialised middleware, be used by sight-impaired creative makers to draw 2D linear images?

In particular, the aims of the study were:

- To establish what current analogue tools were used by the user group to plan and execute drawings.

- To test the boundaries of technology arrangements working with sight-impaired users regarded as ‘experts’.

- To observe how people with sight impairments experience drawing with a touch/audio-led system.

With the research question and aims in mind, we conducted a two-hour remote online user study with each user (n=5). Users were asked to complete three separate drawing tasks: two preselected pictures (a geometric shape and a house) and one drawing of their choice.

The system provided to participants used haptic middleware called Toia™ to give new haptic facilities to the Unreal Engine (a well-known virtual world creation tool). Participants controlled the system via a physical haptic device – the Novint Falcon™. The Falcon is a desktop haptic robotic device that allows the user to ‘feel’ physical bi-directional force in a digital world. While controlling this with their dominant hand, users were asked to use their non-dominant hand to operate a simple ‘clicker’ device, which they tapped to create new points in the virtual space as part of a dot-to-dot drawing style in the software (see Figure 2 for setup).

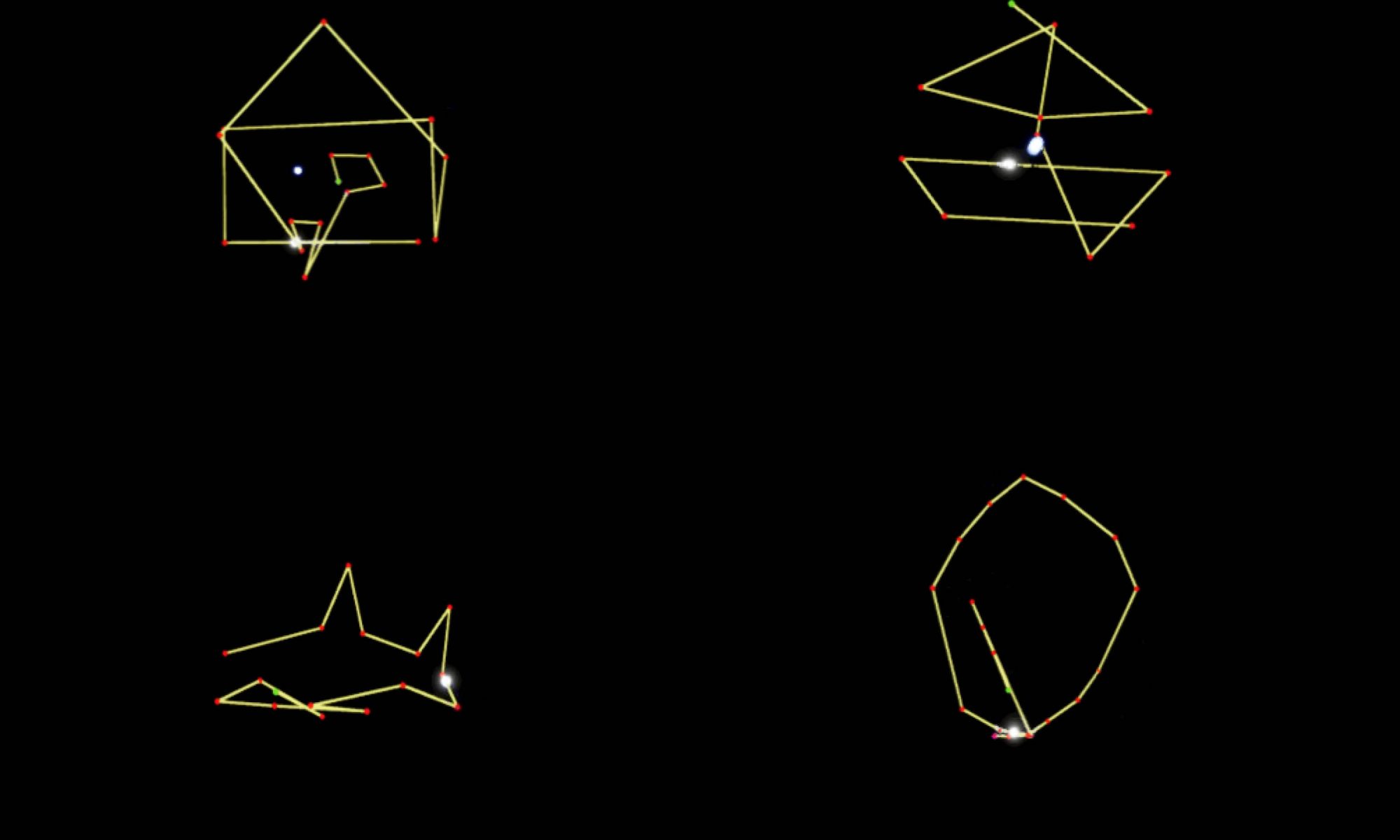

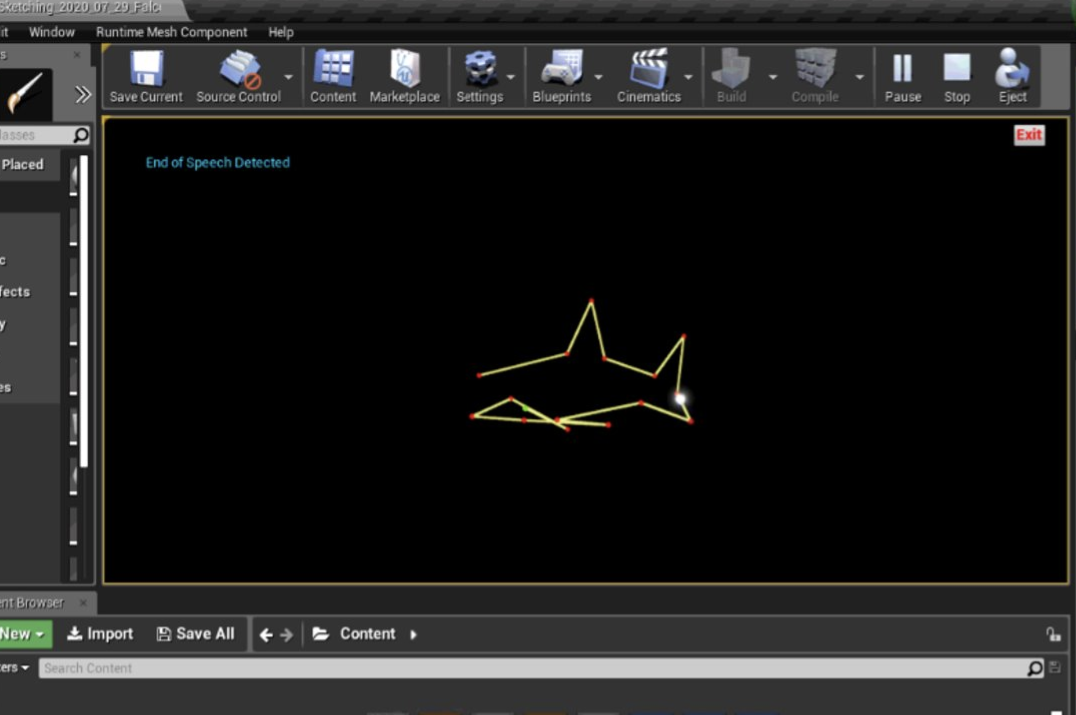

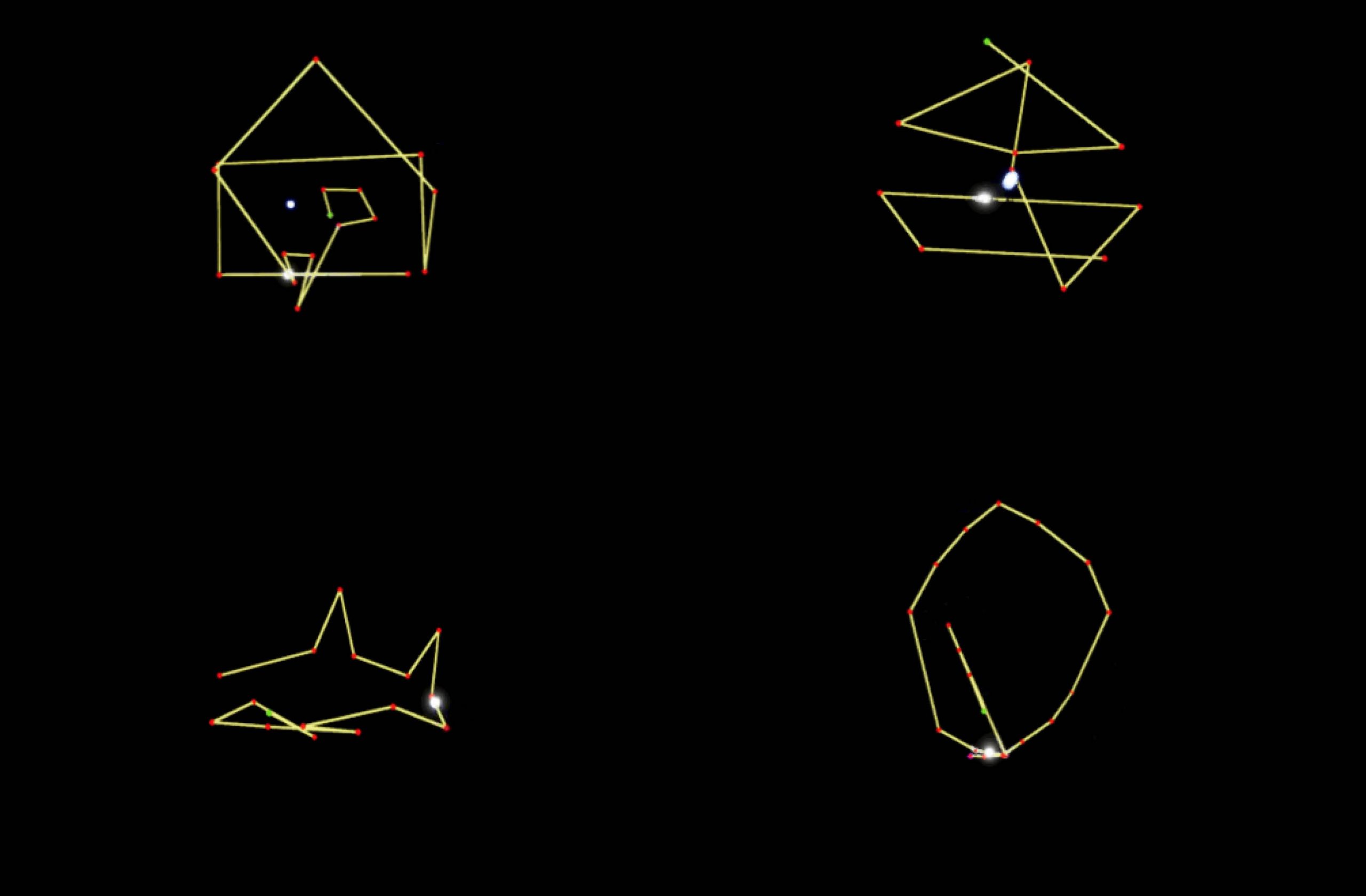

Each participant embraced the technology by exploring how they could draw in the digital space. This involved experimentation, but generally they drew something they held in their mind as a clear mental image. The drawings ranged from a shark and houses (a set task) to a challenge testing how accurately they could draw a circle and connect it back to its starting point (see Figure 3). Using the technology, users were able to experience both free drawing and tracing or re-reading (that is, feeling their drawings on replay) to remind them what had been drawn. This was enabled via a switchable haptic track and trace mode.
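The dot-to-dot drawing and replay behaviour described above can be illustrated with a minimal sketch. This is not the study's implementation and does not use the Toia, Unreal Engine or Novint Falcon APIs; the class `DotToDotDrawing` and its methods `tap` and `trace` are hypothetical names chosen for illustration. It assumes a drawing is an ordered list of 2D points joined by straight lines, and that replay means stepping a cursor along those lines at roughly even spacing.

```python
from dataclasses import dataclass, field
from math import ceil, hypot
from typing import List, Tuple

Point = Tuple[float, float]


@dataclass
class DotToDotDrawing:
    """Hypothetical model: a drawing is an ordered list of tapped points."""
    points: List[Point] = field(default_factory=list)

    def tap(self, x: float, y: float) -> None:
        """Record a new point, as if the clicker were tapped at (x, y)."""
        self.points.append((x, y))

    def length(self) -> float:
        """Total length of the straight lines joining consecutive points."""
        pairs = zip(self.points, self.points[1:])
        return sum(hypot(bx - ax, by - ay) for (ax, ay), (bx, by) in pairs)

    def trace(self, step: float) -> List[Point]:
        """Positions along the drawn line, at most `step` apart, for replay."""
        if step <= 0:
            raise ValueError("step must be positive")
        if not self.points:
            return []
        path: List[Point] = []
        for (ax, ay), (bx, by) in zip(self.points, self.points[1:]):
            segment = hypot(bx - ax, by - ay)
            pieces = max(1, ceil(segment / step))
            # Emit the start of each piece; the segment end is emitted as
            # the start of the next segment (or as the final point below).
            for i in range(pieces):
                t = i / pieces
                path.append((ax + t * (bx - ax), ay + t * (by - ay)))
        path.append(self.points[-1])
        return path


drawing = DotToDotDrawing()
for x, y in [(0, 0), (2, 0), (2, 2)]:
    drawing.tap(x, y)
replay_path = drawing.trace(step=1.0)
```

In a real system each position in `replay_path` would be a target towards which the haptic device guides the user's hand; here it is simply a list of coordinates.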

The participants also took the opportunity to give feedback to the research team on how the system could be improved. Suggestions included providing more sound assistance for screen navigation and adding options such as an ‘undo’ button to edit drawings at will.

Discussion

Current digital drawing tools generally lack intuitive, usable sensory feedback. Analogue drawing tools, although tactile in nature, don’t offer sight-impaired artists a way to edit drawings on the fly. This study highlighted the need for a touch- and sound-assisted drawing tool to aid sight-impaired users’ access to digital creative tooling. From the study we found that the haptic tool can provide the following affordances to give sight-impaired users greater access to digital tooling:

- A bi-directional force feedback tool can enable sight-impaired users to create 2D pictures and form an accurate mental image of them.

- A user-centred haptic system with sound cues can aid users’ understanding of orientation in virtual space.

- Track and trace options can aid users’ memory of picture shape.
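As an illustration of how sound cues might convey orientation, the sketch below maps a cursor position to a stereo pan and a pitch. The study does not state how its sonification was designed, so this mapping is an assumption: the function `sonify_position`, its parameters, the 220 Hz to 880 Hz range and the convention that y increases upwards are all hypothetical.

```python
from typing import Tuple


def sonify_position(
    x: float,
    y: float,
    width: float,
    height: float,
    low_hz: float = 220.0,
    high_hz: float = 880.0,
) -> Tuple[float, float]:
    """Map a canvas position to (pan, frequency).

    pan runs from -1.0 (far left) to 1.0 (far right).
    frequency rises from low_hz at the bottom edge to high_hz at the top,
    on an exponential scale so equal distances sound like equal intervals.
    """
    if width <= 0 or height <= 0:
        raise ValueError("canvas dimensions must be positive")
    # Clamp to the canvas so positions outside it still give a valid cue.
    u = min(max(x / width, 0.0), 1.0)
    v = min(max(y / height, 0.0), 1.0)
    pan = 2.0 * u - 1.0
    frequency = low_hz * (high_hz / low_hz) ** v
    return pan, frequency


centre_pan, centre_hz = sonify_position(5, 5, width=10, height=10)
```

Under these assumptions the centre of the canvas sounds in the middle of the stereo field, one octave above the bottom edge.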

Users’ focused suggestions for the next iteration of the drawing tool included:

- The addition of realistic drawing sounds, i.e. sounds specifically associated with drawing, such as a pencil dragging on textured paper.

- Additional ‘non-speech’ sounds (e.g., a ‘beep’ or ‘ping’) to help users overcome difficulties with screen navigation.

- Opportunistic personalised settings on the haptic device stylus to increase users’ freedom of use and creativity.

- The potential for an auto-close cue to complete partially drawn shapes.
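The auto-close suggestion can be sketched as a simple distance check. This is one possible reading of the idea rather than a design from the study: the functions `should_offer_auto_close` and `auto_close`, and the `tolerance` parameter, are hypothetical. The sketch assumes a shape is a list of 2D points and that a cue is offered when the last point lands near, but not exactly on, the first.

```python
from math import hypot
from typing import List, Tuple

Point = Tuple[float, float]


def should_offer_auto_close(points: List[Point], tolerance: float) -> bool:
    """True when the open end of the shape lies within `tolerance` of its start."""
    if len(points) < 3:
        return False  # too few points to enclose an area
    (x0, y0), (xn, yn) = points[0], points[-1]
    gap = hypot(xn - x0, yn - y0)
    # A gap of zero means the shape is already closed, so no cue is needed.
    return 0 < gap <= tolerance


def auto_close(points: List[Point]) -> List[Point]:
    """Return a copy of the shape with a final segment back to the start."""
    if not points:
        return []
    return list(points) + [points[0]]


nearly_closed = [(0.0, 0.0), (4.0, 0.0), (4.0, 3.0), (0.2, 0.1)]
if should_offer_auto_close(nearly_closed, tolerance=0.5):
    closed_shape = auto_close(nearly_closed)
```

Whether the cue should be a sound, a haptic pull towards the start point, or both is left open here.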

What next?

The concept of touching and creating pictures via touch and sound has been a revelation to the RNIB user group of people with sight impairments within this study. The OU research team and the HAPPIE consortium now aim to expand the tool concept into a larger one: building a studio space. Once again working closely with RNIB participants, we would like to develop a virtual creative space with 2D/3D tooling systems. This type of space will become an immersive multi-sensory realm and holds the potential to alter how we perceive the virtual and physical realm. Watch this space for expanded future sensory tooling to enhance digital space and sound.

August 2021